Accelerating Scale-up with Transfer Learning and DataHowLab

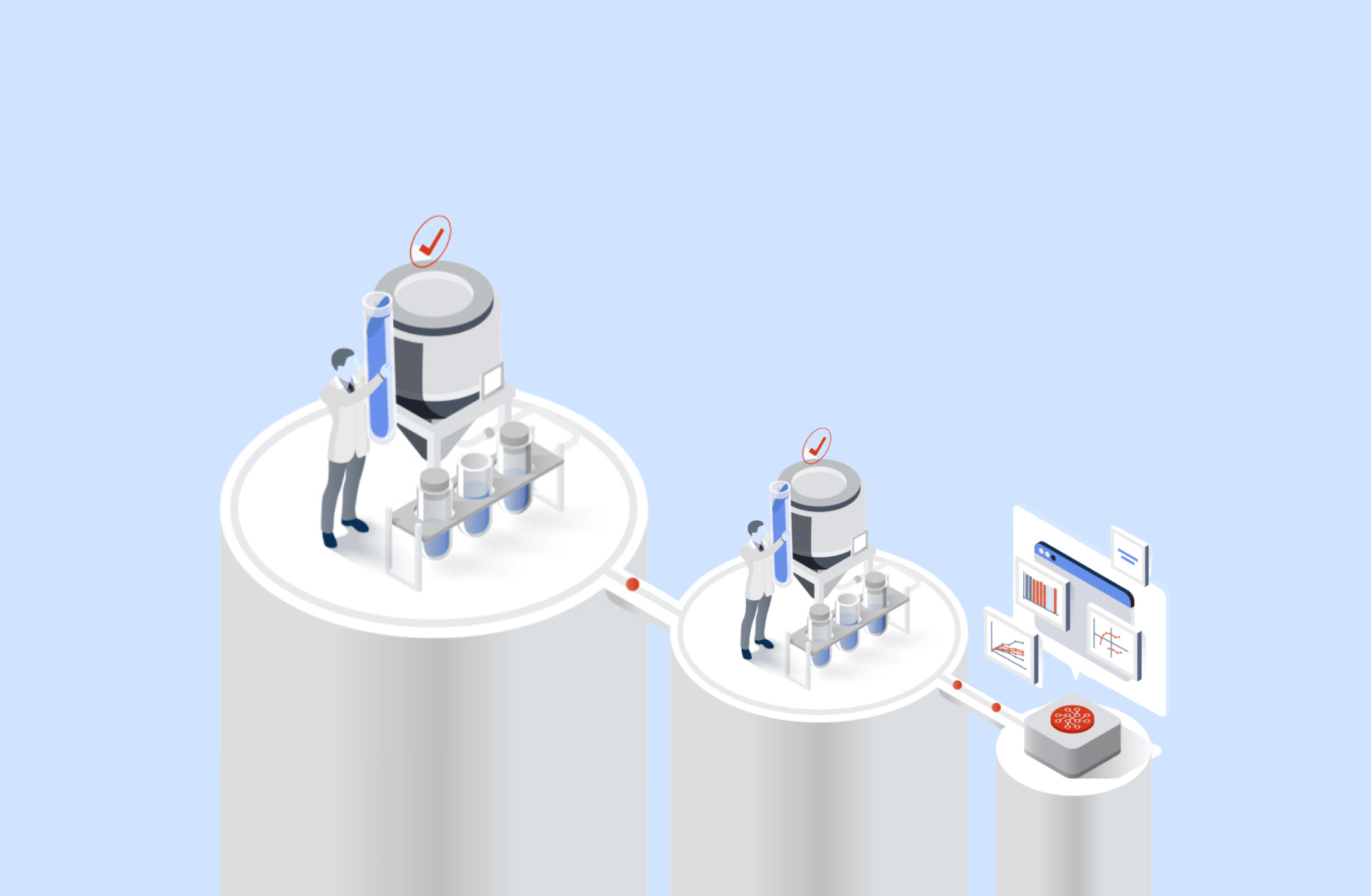

Bioprocess scale-up is complex, as biological systems often respond unpredictably to changes in volume, mixing, and transfer rates. Despite existing knowledge at small scale, scientists still rely on extensive experimentation during scale-up, adding cost and time to projects. AI-based transfer learning from small to large scale offers a new approach.

Assessing the impact of DataHowLab’s hybrid models on process scale-up

Existing modeling approaches typically struggle with scale-up, requiring a high volume of large-scale experiments despite teams having already generated extensive small-scale process knowledge.

DataHow’s hybrid models have a powerful ability to generalize – to use data from low-cost small-scale systems and transfer knowledge across scales to accurately simulate outcomes at large scale – creating an entirely new way to navigate the scale-up challenge for development scientists.

This advantage comes from the structure of hybrid models. They combine mechanistic scientific knowledge, such as mass balances, with machine learning models. The mechanistic backbone provides foundational knowledge grounded in known scientific principles, allowing the model to account for scale-dependent effects and apply process knowledge that holds across scales.
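To make the idea concrete, here is a minimal sketch of a hybrid structure, not DataHowLab's actual implementation. Fed-batch mass balances handle the scale-dependent terms (volume and feed rate), while a placeholder function stands in for the machine-learning part that predicts per-cell specific rates. The function names, the Monod-like rate form, and every constant are assumptions chosen purely for illustration.

```python
import math


def learned_rates(biomass, substrate):
    """Stand-in for the machine-learning component (hypothetical).

    A real hybrid model would fit this mapping from process data. The
    Monod-like form and all constants here are invented for illustration.
    """
    mu = 0.04 * substrate / (0.5 + substrate)  # specific growth rate, 1/h
    q_s = mu / 0.5 + 0.001                     # specific substrate uptake
    q_p = 0.002 + 0.01 * mu                    # specific product formation
    return mu, q_s, q_p


def simulate(volume_l, feed_l_per_h, hours=168.0, dt=0.1,
             x0=0.5, s0=5.0, s_feed=100.0):
    """Explicit Euler integration of fed-batch mass balances.

    Scale enters only through the volume and the feed rate; the specific
    rates come from learned_rates and are expressed per unit of biomass.
    """
    v, x, s, p = volume_l, x0, s0, 0.0
    for _ in range(int(round(hours / dt))):
        mu, q_s, q_p = learned_rates(x, s)
        dilution = feed_l_per_h / v                 # 1/h, caused by feeding
        dx = mu * x - dilution * x                  # biomass balance
        ds = -q_s * x + dilution * (s_feed - s)     # substrate balance
        dp = q_p * x - dilution * p                 # product balance
        x += dx * dt
        s = max(s + ds * dt, 0.0)                   # keep substrate non-negative
        p += dp * dt
        v += feed_l_per_h * dt                      # volume balance
    return {"volume_l": v, "biomass": x, "substrate": s, "titer": p}


# Same rate function at two scales, with feed scaled in proportion to volume.
small = simulate(volume_l=0.015, feed_l_per_h=0.015 * 0.002)
large = simulate(volume_l=10.0, feed_l_per_h=10.0 * 0.002)
print(small["titer"], large["titer"])
```

In this toy setup, the two runs give the same titer because the feed is scaled in proportion to volume, and a different feed-to-volume ratio changes the outcome through the balance equations alone. Real scale-up involves further effects, such as mixing and transfer rates, that this sketch does not model.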

These key capabilities were assessed by Wheeler Bio, a US-based CDMO, which asked DataHow to support it with cross-scale modeling in order to learn larger-scale behaviour with as few large-scale runs as possible:

OBJ 1: Demonstrate the ability of DataHowLab’s hybrid models to transfer knowledge across scales

OBJ 2: Benchmark DataHowLab’s hybrid models against multiple linear regression (MLR) models – the industry standard – for the scale-up challenge.

OBJ 3: Assess how DataHowLab supports operational excellence by reducing the impact of issues or deviations in manufacturing processes.

Key Results

DataHowLab, with its technologies and transfer learning capabilities, offers scientists a more time- and cost-effective approach to scale-up. Small-scale data and insights become a key asset, rather than a stage gate, generating process knowledge despite scale differences. For the full results with supporting plots, download the full case study below:

OBJ 1: The 10 L scale was accurately predicted using the available Ambr15 data.

OBJ 2: Hybrid models significantly outperformed MLR models (the industry standard) when predicting large-scale behaviour from small-scale data.

OBJ 3: The model trained with small- and large-scale data was able to recommend and simulate a mitigation strategy to return final titer to target after a feeding error.

Should you wish to further explore the case with the DataHow team, please contact us directly.